Chapter 67: Light Theory

Our goal is to answer “why is ray tracing an OK renderer?”

Radiometry

Consider light to be photons flying through space. A photon is an elementary particle - the smallest discrete unit (quantum) of electromagnetic radiation, a.k.a. light.

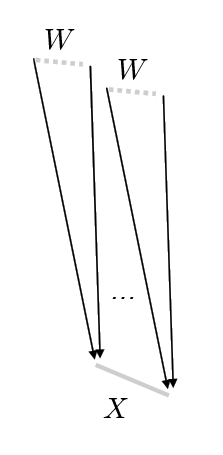

We imagine that our scene is in a steady state. In order to measure light, we can build a sensor in space, with a physical extent (area) $A$.

Using this sensor we can count the number of photons that pass through $A$ for the directions of the wedge $\Gamma$.

Photons carry some amount of energy measured in joules. One joule is roughly the energy required to lift a one kilogram object 10 cm.

We can measure “how much energy is collected for a given time” by dividing by time. A watt is one joule per second.

Radiant Flux

Radiant flux (measured in watts) is the total amount of light energy passing through a surface or region of space per unit time.

Radiant flux is often denoted $\Phi$, or $\Phi(A, \Gamma)$ for some sensor $A$ and wedge $\Gamma$. The wedge is a solid angle, or three dimensional angle (measured in steradians instead of radians) that describes the directions in which we are measuring light. Often the wedge in question is the entire upper hemisphere above the surface we are concerned with.

For a given wedge $\Gamma$, the solid angle $|\Gamma|$ is the area the wedge covers on a unit sphere. Solid angles are measured in steradians (sr) - the solid angle of a full sphere is $4\pi$ sr. A hemisphere subtends $2\pi$ sr.
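As a quick sanity check on these values, the following sketch integrates the solid-angle element $\sin\theta \, d\theta \, d\phi$ numerically. The function name `cap_solid_angle` and the midpoint-rule integration are illustrative choices, not part of the original notes.

```python
import math

def cap_solid_angle(theta_max, steps=100_000):
    """Solid angle (steradians) of all directions within theta_max of an axis.

    Integrates sin(theta) d(theta) with the midpoint rule; the integral over
    the azimuth phi contributes a constant factor of 2*pi.
    """
    d_theta = theta_max / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        total += math.sin(theta) * d_theta
    return 2.0 * math.pi * total

hemisphere = cap_solid_angle(math.pi / 2)  # approximately 2*pi
sphere = cap_solid_angle(math.pi)          # approximately 4*pi
print(hemisphere, sphere)
```

A narrower wedge is obtained the same way by passing a smaller `theta_max`.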

If we assume that the radiant flux varies continuously as the geometry of the sensor is altered, we can use this concept to define other concepts that will be more useful to us.

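Note that the total flux measured over a sphere $A$ surrounding a light and over a larger encompassing sphere $B$ is the same; however, less energy is passing through each small area of $B$ than of $A$.

Because the surface area of a sphere of radius $r$ is $4\pi r^2$, the energy per unit area falls off with a factor of

$$\frac{1}{r^2}$$

which explains why the amount of energy received falls off with the square of the distance to the light.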
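The falloff can be illustrated with a small sketch. It assumes an isotropic point light (one that emits equally in all directions) at the centre of each sphere; the function name is hypothetical.

```python
import math

def flux_density_on_sphere(flux_watts, radius_m):
    """Flux per unit area (W/m^2) on a sphere centred on an isotropic point light.

    The same total flux is spread over the sphere's area, 4*pi*r^2.
    """
    return flux_watts / (4.0 * math.pi * radius_m ** 2)

flux = 100.0                               # total flux in watts (made-up value)
near = flux_density_on_sphere(flux, 1.0)   # sphere of radius 1 m
far = flux_density_on_sphere(flux, 2.0)    # encompassing sphere of radius 2 m
ratio = near / far                         # doubling the radius quarters the density
print(near, far, ratio)
```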

We want to get as close as we can to talking about light for a given ray - what’s the difference between that and radiant flux? Radiant flux is a measure over a region of space and an orientation in space - but we always talk about points, not regions.

Irradiance

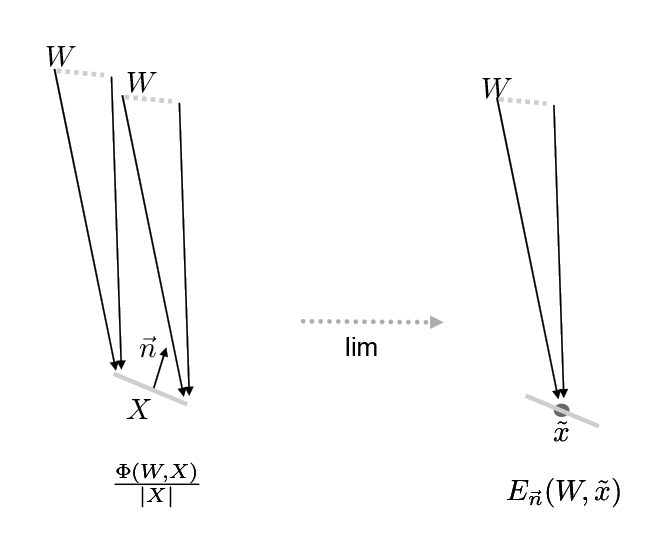

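Note that our sensor has a normal $\hat{n}$. If we divide the radiant flux by the area of the sensor, we can measure the energy density for that area:

$$\frac{\Phi(A, \Gamma)}{|A|}$$

which is measured in watts per square meter ($\mathrm{W/m^2}$).

If we shrink the area $A$ around a single point $X$, this value converges (in the limit) to what we call irradiance:

$$E(X, \hat{n}, \Gamma) = \lim_{A \to X} \frac{\Phi(A, \Gamma)}{|A|}$$

If the sensor is broken into smaller pieces $A_i$, the radiant flux over the entire sensor is the sum:

$$\Phi(A, \Gamma) = \sum_i \Phi(A_i, \Gamma)$$

And, considering pointwise irradiance for some area $A$ over position $X$:

$$\Phi(A, \Gamma) = \int_A E(X, \hat{n}, \Gamma) \, dX$$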

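Why does $\hat{n}$ matter? If our sensor were oriented differently, the amount of energy collected would be different. This is Lambert’s law - the amount of light arriving at a surface is proportional to $\cos\theta$, where $\theta$ is the angle between the surface normal $\hat{n}$ and the direction the light arrives from.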

Irradiance is the area density of flux arriving at a surface. In other words, it is the radiant flux received by a surface per unit area.
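To make the role of the normal concrete, here is a minimal sketch of the irradiance at a point due to a single isotropic point light. The point-light model, the function name, and the sample values are assumptions for illustration; the normal is assumed to be unit length.

```python
import math

def irradiance_from_point_light(flux_watts, light_pos, point, normal):
    """Irradiance (W/m^2) at `point` with unit `normal` from an isotropic point light.

    Combines the inverse-square falloff with Lambert's cosine law.
    """
    to_light = [l - p for l, p in zip(light_pos, point)]
    dist = math.sqrt(sum(c * c for c in to_light))
    direction = [c / dist for c in to_light]
    # Cosine of the angle between the normal and the direction to the light;
    # clamped so surfaces facing away from the light receive nothing.
    cos_theta = max(0.0, sum(n * d for n, d in zip(normal, direction)))
    return flux_watts / (4.0 * math.pi * dist ** 2) * cos_theta

light = (0.0, 0.0, 2.0)
origin = (0.0, 0.0, 0.0)
tilt = math.radians(60.0)

facing = irradiance_from_point_light(100.0, light, origin, (0.0, 0.0, 1.0))
tilted = irradiance_from_point_light(100.0, light, origin,
                                     (math.sin(tilt), 0.0, math.cos(tilt)))
away = irradiance_from_point_light(100.0, light, origin, (0.0, 0.0, -1.0))
print(facing, tilted, away)
```

Tilting the sensor 60 degrees away from the light halves the irradiance, since $\cos 60^\circ = 0.5$.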

Radiance

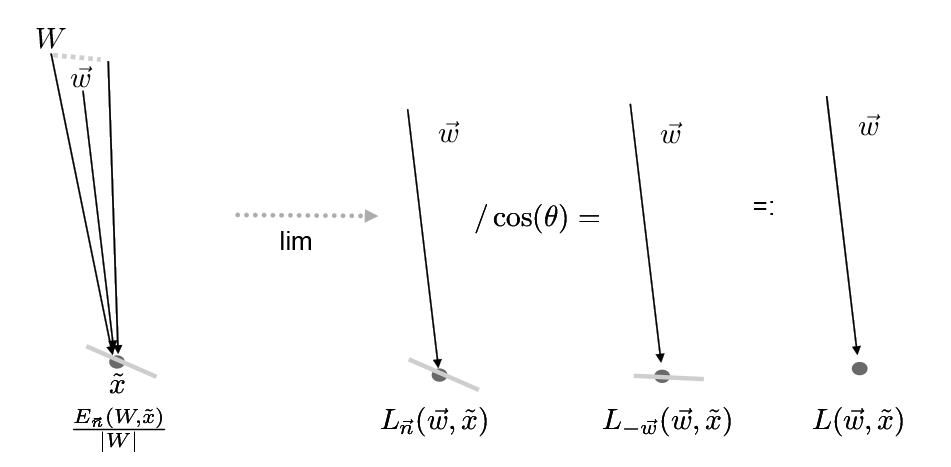

So, we have a light measurement at a point, but we still don’t have “rays”. We want to consider a measurement that does not depend on the wedge solid angle.

Thus arguably one of the most useful measures (in particular with relevance to our ray tracer) is radiance: the flux per unit area, per unit solid angle. Its value is constant along a ray, thus it’s a “natural quantity to compute with ray tracing”.

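To compute radiance we need to divide out the magnitude of $\Gamma$ (the wedge):

$$\frac{E(X, \hat{n}, \Gamma)}{|\Gamma|}$$

This is irradiance divided by steradians, so it is measured in $\mathrm{W/(m^2 \cdot sr)}$.

Measuring smaller and smaller wedges gets us closer to a ray. Under the same assumptions of continuity we have had, this value converges to the radiance measurement:

$$L(X, \hat{n}, \hat{\omega}) = \lim_{\Gamma \to \hat{\omega}} \frac{E(X, \hat{n}, \Gamma)}{|\Gamma|}$$

That is the radiance measurement for a point $X$ facing direction (normal) $\hat{n}$ and measuring light coming from direction $\hat{\omega}$.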

We want to have a measurement that is without regard to the surface normal. Recall Lambert’s law about how much energy a point would receive. Accounting for this, we can write the incoming radiance:

$$L_i(X, \hat{\omega}) = \frac{L(X, \hat{n}, \hat{\omega})}{\hat{\omega} \cdot \hat{n}}$$

In other words, by dividing by $\hat{\omega} \cdot \hat{n} = \cos\theta$ we are considering the surface facing directly towards the incoming light. A surface oriented this way receives all of the incoming illumination, so if we pick this surface, we can consider this simply the light arriving at this point.

A good thing about radiance is that it is constant along a ray in space.

We can also talk about outgoing radiance, $L_o(X, \hat{\omega})$: the light leaving the point $X$ in direction $\hat{\omega}$.

BRDF

But once we have radiance, what we really care about is reflected light, where the reflected light depends on the exact diffusing nature of the material. We’d like to define in general that the reflected light will be some proportion of the incident light arriving from some wedge $\Gamma$:

$$L_o^1(X, \hat{\omega}_o) \propto E^0(X, \hat{n}, \Gamma)$$

Here, $L_o^1$ is a measurement of outgoing photons - the 1 indicates that these are photons that have bounced off the surface (once). It is in units of radiance.

$E^0$ is a measurement of photons that have been emitted by some light source, but have not yet bounced, that are received from some solid angle $\Gamma$ onto the point $X$. It is in units of irradiance.

- $X$ is the point at which we measure the BRDF.

- $\hat{n}$ is the normal of the surface at that point.

- $\hat{\omega}_o$ is the direction in which we are measuring reflected light.

- $\Gamma$ is the solid angle in which the incoming light approaches the surface.

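We can also write this as a ratio:

$$f(X, \hat{n}, \Gamma, \hat{\omega}_o) = \frac{L_o^1(X, \hat{\omega}_o)}{E^0(X, \hat{n}, \Gamma)}$$

And by shrinking $\Gamma$ to a single direction $\hat{\omega}_i$, we get:

$$f(X, \hat{n}, \hat{\omega}_i, \hat{\omega}_o) = \lim_{\Gamma \to \hat{\omega}_i} \frac{L_o^1(X, \hat{\omega}_o)}{E^0(X, \hat{n}, \Gamma)}$$

This is the bidirectional reflectance distribution function, or BRDF. It tells us the ratio of light reflected in direction $\hat{\omega}_o$ to light arriving from direction $\hat{\omega}_i$, at a point $X$ on a surface which has surface normal $\hat{n}$.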

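Note that a purely diffuse surface has BRDF:

$$f(X, \hat{n}, \hat{\omega}_i, \hat{\omega}_o) = \frac{\rho}{\pi}$$

with this notation, where $\rho$ is the albedo (the fraction of incoming light the surface reflects). The $\hat{n} \cdot \hat{l}$ or $\cos\theta$ dot product we usually see in our rendering code is accounted for elsewhere. This is consistent because if we view a surface at a grazing angle, fewer photons are reflecting towards us per surface area, but we see more surface area per visual area (sensor area).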
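The factor of $\pi$ in the denominator can be checked numerically. The sketch below assumes the usual Lambertian BRDF of albedo divided by $\pi$ and integrates it, weighted by the cosine term, over the hemisphere; the result should equal the albedo, meaning the surface reflects exactly that fraction of the light it receives. The function name and the midpoint-rule integration are illustrative.

```python
import math

def reflected_fraction(albedo, steps=100_000):
    """Integrate (albedo / pi) * cos(theta) over the hemisphere of directions.

    Uses d(omega) = sin(theta) d(theta) d(phi); the phi integral contributes
    a constant factor of 2*pi.
    """
    brdf = albedo / math.pi
    d_theta = (math.pi / 2.0) / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        total += brdf * math.cos(theta) * math.sin(theta) * d_theta
    return 2.0 * math.pi * total

fraction = reflected_fraction(0.8)  # approximately 0.8
print(fraction)
```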

Rendering Equation

Once we have this notion of radiance and a BRDF, we can construct an entire reflection equation.

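From the rendering equation we know that the amount of outgoing light is an integral over all incoming light:

$$L_o(X, \hat{\omega}_o) = \int_{\Omega} f(X, \hat{n}, \hat{\omega}_i, \hat{\omega}_o) \, L_i(X, \hat{\omega}_i) \cos\theta_i \, d\hat{\omega}_i$$

Which is the BRDF multiplied by the amount of incoming light (dependent on $\cos\theta_i$).

This is similarly written:

$$L_o(X, \hat{\omega}_o) = \int_{\Omega} f(X, \hat{n}, \hat{\omega}_i, \hat{\omega}_o) \, L_i(X, \hat{\omega}_i) \, (\hat{\omega}_i \cdot \hat{n}) \, d\hat{\omega}_i$$

With the following parts:

$L_o(X, \hat{\omega}_o)$ is the outgoing light in the direction $\hat{\omega}_o$ for a point $X$

$f(X, \hat{n}, \hat{\omega}_i, \hat{\omega}_o)$ is the BRDF, for a point $X$, in direction $\hat{\omega}_o$ for incoming light $\hat{\omega}_i$

$L_i(X, \hat{\omega}_i)$ is the incoming light

$(\hat{\omega}_i \cdot \hat{n})$ is the attenuation of light based on the angle of incidence $\theta_i$

$\int_{\Omega} \ldots \, d\hat{\omega}_i$ means we are integrating over all incoming light in the hemisphere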
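One way to approximate this integral is Monte Carlo estimation: sample incoming directions, evaluate the integrand for each, and average. The sketch below is a minimal version under several assumptions that are not from the notes: the surface normal is fixed at +z, directions are sampled uniformly over the hemisphere (probability density $1/(2\pi)$), and the BRDF and incoming light are passed in as plain functions. All names are hypothetical.

```python
import math
import random

def sample_hemisphere(rng):
    """Uniformly sample a unit direction on the hemisphere around +z.

    Picking z uniformly in [0, 1) gives a uniform distribution over the
    hemisphere's surface, with probability density 1 / (2*pi).
    """
    z = rng.random()
    phi = 2.0 * math.pi * rng.random()
    r = math.sqrt(max(0.0, 1.0 - z * z))
    return (r * math.cos(phi), r * math.sin(phi), z)

def estimate_outgoing_radiance(brdf, incoming_radiance, samples=20_000, seed=0):
    """Monte Carlo estimate of the reflection integral for a surface with normal +z."""
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)
    total = 0.0
    for _ in range(samples):
        w_i = sample_hemisphere(rng)
        cos_theta = w_i[2]  # dot product of w_i with the normal (0, 0, 1)
        total += brdf(w_i) * incoming_radiance(w_i) * cos_theta / pdf
    return total / samples

albedo = 0.5
estimate = estimate_outgoing_radiance(
    brdf=lambda w_i: albedo / math.pi,   # purely diffuse surface
    incoming_radiance=lambda w_i: 1.0,   # constant light from every direction
)
print(estimate)  # close to the albedo, up to sampling noise
```

For a diffuse surface under constant unit incoming radiance the exact answer is the albedo, which makes this a convenient test case. Sampling proportionally to the cosine term would reduce the noise, but uniform sampling keeps the sketch short.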

Note that we are only going to concern ourselves with the particle behaviour of light, not the wave-like behaviour.

The Current Situation

Right now in our ray tracers we are essentially pretending we only have incoming light from one single direction (equivalent to following the light forward).

Eventually we want to replace that with multiple sampled directions $\hat{\omega}_i$ to create a better physical approximation of light.

In the meantime, we also want to talk in a bit more detail about light and how it is measured, and where this equation comes from.

BSDF

It’s worth pointing out that the BRDF is not sufficient to describe what we have done so far. In order to account for light transmitted through translucent surfaces, we need a BTDF - a bidirectional transmittance distribution function. If we combine these two concepts together - the BRDF and BTDF - we get the bidirectional scattering distribution function (BSDF).

This also does not account for subsurface scattering (whereby light may leave a surface at a different point than where it arrived), but neither will we.